By using these tools, the user describes the syntax of the language to be recognized, and the tool generates a parser for the specified language. The importance of parsing in computer science has implied the existence of different tools aimed at the automatic generation of parsers. #Online execution ex and yacc compiler softwareParsers are used for different tasks in computer science such as performing machine-level translation of programs ( e.g., (de)compilers, transpilers, and (dis)assemblers), creating software development tools ( e.g., profilers, debuggers, and linkers), natural language processing ( e.g., dependency and constituency parsing), and data format processing ( e.g., JSON, GraphViz DOT, and DNS zone files). Parsers are software components that, using a lexer to recognize the terminal symbols of a given language, analyze an input, check its correct syntax, and build a tree that represents the input program with a hierarchical data structure. Lexical analyzers are commonly called lexers or scanners. The process of recognizing the terminal symbols, also called tokens, from a sequence of characters is called lexical analysis. Such a grammar may describe a natural language ( e.g., English or French), a computer programming language, or even a data format. Parsing-also known as syntax or syntactic analysis-is the process of analyzing a string of terminal symbols conforming to the rules of a formal grammar. Under the context of the academic study conducted, ANTLR has shown significant differences for most of the empirical features measured.

Two random groups of students implement the same language using a different parser generator, and we statistically compare their performance with different measures. In this article, we present such an empirical study by conducting an experiment with undergraduate students of a Software Engineering degree.

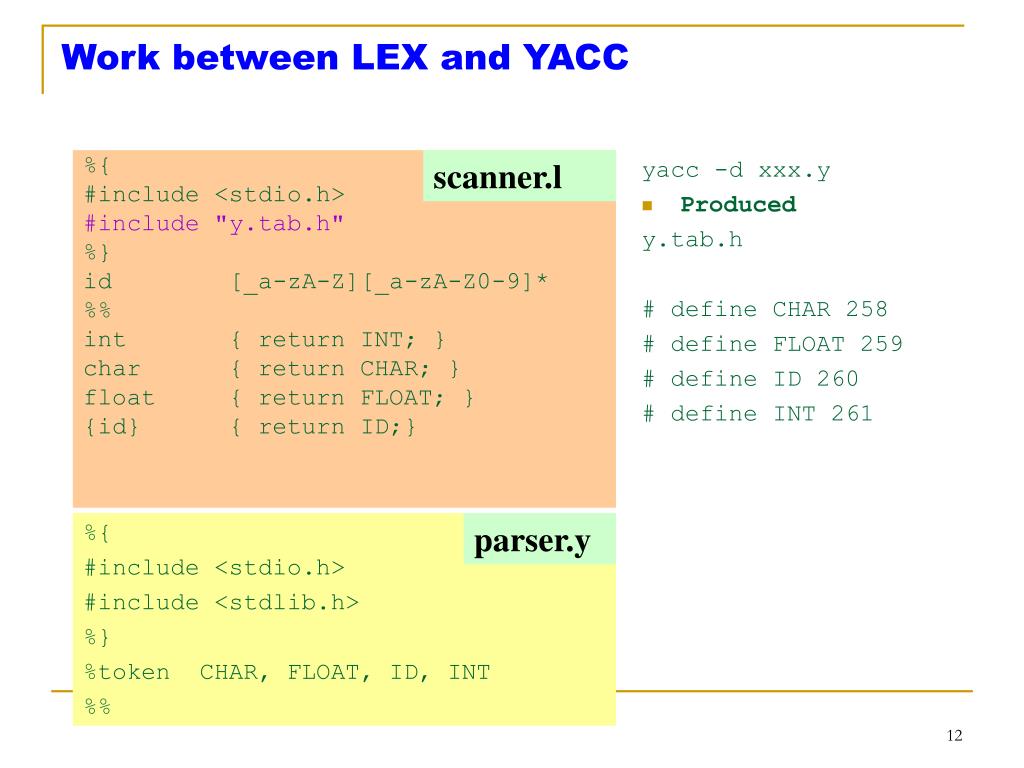

There exist different qualitative comparisons of the features provided by both approaches, but no study evaluates empirical features such as language implementor productivity and tool simplicity, intuitiveness, and maintainability. Even though ANTLR provides more advanced features, Lex/Yacc is still the preferred choice in many university courses. Two of the most common parser generation tools are the classic Lex/Yacc and ANTLR. Due to the widespread usage of parsers, there exist different tools aimed at automizing their generation. Parsers are used in different software development scenarios such as compiler construction, data format processing, machine-level translation, and natural language processing.
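To make the division of labor between a lexer and a parser concrete, here is a minimal hand-written sketch in Python. It is not output from Lex/Yacc or ANTLR and is not taken from the study; the tiny arithmetic grammar and the names `tokenize`, `Parser`, and `parse_expression` are assumptions chosen for illustration. The lexer turns characters into tokens, and the parser checks the syntax and builds a tree of nested tuples.

```python
import re
from typing import List, Tuple

Token = Tuple[str, str]  # (kind, text); kinds here are "NUM", "OP", "EOF"

# Hypothetical token definitions: integers and the symbols + - * / ( )
TOKEN_RE = re.compile(r"\s*(?:(?P<NUM>\d+)|(?P<OP>[-+*/()]))")


def tokenize(text: str) -> List[Token]:
    """Lexical analysis: turn a character sequence into a list of tokens."""
    text = text.rstrip()
    tokens: List[Token] = []
    pos = 0
    while pos < len(text):
        match = TOKEN_RE.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected character at offset {pos}")
        kind = match.lastgroup
        tokens.append((kind, match.group(kind)))
        pos = match.end()
    return tokens


class Parser:
    """Recursive-descent parser for an assumed toy grammar:

    expr   -> term (('+' | '-') term)*
    term   -> factor (('*' | '/') factor)*
    factor -> NUM | '(' expr ')'
    """

    def __init__(self, tokens: List[Token]) -> None:
        self.tokens = tokens
        self.pos = 0

    def peek(self) -> Token:
        if self.pos < len(self.tokens):
            return self.tokens[self.pos]
        return ("EOF", "")

    def advance(self) -> Token:
        token = self.peek()
        self.pos += 1
        return token

    def parse(self):
        tree = self.expr()
        if self.peek()[0] != "EOF":
            raise SyntaxError(f"unexpected token {self.peek()[1]!r}")
        return tree

    def expr(self):
        node = self.term()
        while self.peek() in (("OP", "+"), ("OP", "-")):
            op = self.advance()[1]
            node = (op, node, self.term())  # left-associative tree node
        return node

    def term(self):
        node = self.factor()
        while self.peek() in (("OP", "*"), ("OP", "/")):
            op = self.advance()[1]
            node = (op, node, self.factor())
        return node

    def factor(self):
        kind, text = self.advance()
        if kind == "NUM":
            return int(text)
        if text == "(":
            node = self.expr()
            if self.advance() != ("OP", ")"):
                raise SyntaxError("expected ')'")
            return node
        raise SyntaxError(f"unexpected token {text!r}")


def parse_expression(text: str):
    """Run the lexer, then the parser, and return the syntax tree."""
    return Parser(tokenize(text)).parse()


if __name__ == "__main__":
    print(parse_expression("1 + 2 * 3"))  # ('+', 1, ('*', 2, 3))
```

A parser generator takes over the part written by hand here: given a grammar description similar to the one in the `Parser` docstring, it produces the equivalent recognition code automatically.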
